30 Apr 2026

AI took massive leaps in 2025. But while the pace of technological progress has eclipsed decades of prior development, panellists at a recent Engineering and AI webinar hosted by Engineering New Zealand agreed that most engineering organisations are still only scratching the surface of what AI can deliver in practice. With AI evolving almost daily, 2026 is expected to bring even more transformative change for the profession – but is it ready for what’s coming?

What changed for engineers in 2025

In 2025, engineers became more comfortable using AI tools such as Copilot for time-consuming and complex tasks, particularly comparison and checking. This included verifying compliance against project and design requirements, business standards, client specifications and relevant codes, as well as highlighting areas that required clarification or further review.

There has also been a marked improvement in the reliability of AI outputs. Tools are becoming more dependable and more deeply integrated into everyday engineering workflows.

This is already having a real impact through time saved, reduced rework and improved productivity. It has also improved the quality of outcomes and client satisfaction.

While hallucinations have reduced, panellists were clear that there has always been – and will always be – a need for a human in the loop: someone who understands AI’s limitations and can make professional judgements about whether outputs are accurate, relevant and appropriate.

From assistants to agents: what’s coming in 2026

Looking ahead to 2026, panellists expect a rapid escalation in AI capability, with meaningful advances occurring almost daily. Justin Flitter, from AI New Zealand – a consultancy that helps organisations understand where, why and how to leverage AI – has seen this momentum firsthand.

He has observed strong demand from the construction and engineering sectors over the past 18 months and says there is significant opportunity to drive innovation and improve workflows.

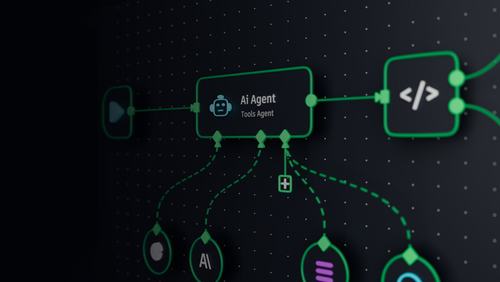

“One of the most impactful developments has been the shift from simple chat interfaces to agents that codify workflows and automate more complex tasks,” he says. “AI platforms have shifted from being assistants we talk to, to workers we can delegate to.”

Slow uptake, despite clear capability

So, if the technology is there, what is holding businesses back from embracing AI?

For Lauren Smith, AI partnership leader at global engineering and consulting firm Mott MacDonald, the barriers to adoption are less about capability and more about confidence. Key concerns include accuracy and reliability, data privacy and security, regulatory compliance, integration with legacy systems, and the availability of training and support.

These concerns have been sharpened by recent high‑profile examples where AI‑generated content introduced errors into reports by consultancies. While the underlying analysis was sound, the incidents reinforced the importance of the governance, human oversight and clear accountability needed for the responsible use of AI in professional practice.

Rakesh Kumar, CEO of Technology Connexions and chair of the Institute of Electrical and Electronics Engineers’ (IEEE’s) DataPort and the Future Directions Committee, notes that every wave of automation has raised similar fears but ultimately lifted productivity and advanced the state of the art.

“AI can help remove bottlenecks and allow engineers to achieve more, deliver better results and ultimately add more value,” he says. “That benefits the individual, their managers and the company as a whole.”

For Lauren, the focus should be on separating hype from value.

“It’s not too late to get into AI – curiosity is the most important starting point,” she says. “If we invest in trust, governance and training, and bring people along on the journey, adoption will follow.”

The risk of doing nothing, or doing it badly

However, Justin cautions that opportunity alone is not enough. Without the right infrastructure, architecture and safeguards, organisations risk doing more harm than good.

One of the biggest risks of not embracing AI, he says, is shadow AI – where employees turn to personal accounts or unapproved tools because their organisation has not provided safe alternatives.

“That leads to IP leakage and data loss,” he says. “Enterprise-grade tools like Copilot operate within existing security and permissions frameworks, which is far safer than ad hoc use of free tools.”

But he warns that simply rolling out Copilot is not the same as “doing AI”, and that there’s a fine line between successful and stalled implementation.

“That’s like giving someone a Maserati without teaching them how to drive,” he says. “Without skills-based training, tools don’t deliver value and adoption stalls. AI shouldn’t be theatre – it needs to be tied to outcomes people are accountable for. If it doesn’t help someone do their job better, faster or more safely, it won’t stick.”

A responsible path forward: A real-world case study

Lauren explains how Mott MacDonald has approached these challenges through its bespoke AI assistant, Every Mott MacDonald Answer, or EMMA.

Trained on the company’s own data, EMMA enables high-quality information retrieval across the business, allowing engineers to quickly source relevant insights from Mott MacDonald’s extensive technical knowledge base, as well as information about the company’s internal processes and workflows.

“Being able to find the right information quickly is significantly reducing time spent searching and reinventing the wheel,” she says.

EMMA can surface everything from internal procedures to technical knowledge and colleagues with relevant expertise. In doing so, it democratises secure access to trusted information across the organisation and reduces second guessing.

“EMMA gives us a different way of interacting with our data to get answers faster,” Lauren says. “We’re also using tools that support decision-making at scale – for example, exploring design options, ways to reduce embodied carbon in a given project or model, or validating structural alternatives against requirements more quickly than a human could alone.”

Why data, infrastructure and governance matter

For Justin, organisations that use AI to securely store, organise and retrieve institutional knowledge will gain a clear competitive advantage.

“We’re no longer competing on headcount – we’re competing on capability,” he says. “The question is: where does your knowledge live, and how easily can AI access it? If anyone in a business can ask a question and get a trusted answer quickly, that accelerates decision-making and value delivery.”

Rakesh agrees. “AI outputs are only as good as the data and the models behind them. Data ownership, infrastructure and governance are all critical. Organisations need to be clear about what data is shared, how it’s protected and how it’s used.”

A significant opportunity lies in capturing organisational knowledge that currently exists only in people’s heads.

“By recording meetings, transcribing discussions and storing that information properly, in the right place, you’re effectively downloading intellectual property into a shared system,” Justin says. “That builds resilience and continuity – organisational knowledge shouldn’t walk out the door when someone leaves.”

“Businesses should absolutely be using AI, at the very least to record meetings, take notes, and download, store and categorise the intellectual property and information that comes from human interactions.”

This approach can also shorten learning curves for graduates and early-career professionals, providing access to environments where they can learn, test judgement and build intuition.

Human in the loop

None of this removes the need for professional responsibility – a cornerstone of engineering practice.

Rakesh is clear that AI will not replace engineers but will elevate the profession as a whole.

“I want to dispel the myth that AI will take jobs away,” he says. “History shows that each wave of automation has ultimately made individual roles broader, deeper and more impactful, while unblocking creativity. The key is preparation, re-skilling and adaptability.”

“If you use an answer that AI gives you, you are responsible for it,” he adds. “AI doesn’t remove responsibility – the engineer always owns the outcome and must be able to validate it. We should be using AI to move up the value chain, not to step away from judgement.”

That makes critical thinking, creativity and intuition more important than ever, and places the onus on engineers to continue upskilling and broadening their expertise.

From capability to adoption

Lauren acknowledges that adoption still lags behind capability. Research shows that while engineering and architecture rank highly in theoretical AI capability, they lag in observed adoption.

At Mott MacDonald, which has been recognised as a ‘frontier firm’ by Microsoft for its use and implementation of AI, trust in EMMA, the company’s agentic AI assistant, comes down to transparency, reliability and repeatability.

“We’re testing the agent constantly. As a user, you know where it’s pulling data from and can understand its limitations,” she says. “Knowing when you can trust the tool – and when a human needs to be in the loop – is crucial.”

Ultimately, she says, success depends on being able to demonstrate tangible impact.

“We focus strongly on building human capability – giving engineers training, tools and the freedom to implement and test AI responsibly. Engineers understand their workflows best. Empowering them is what makes AI adoption succeed. The challenge now is not what AI can do, but how responsibly we implement it.”

Looking ahead, panellists expect more tools trained specifically for engineering use cases, moving beyond text-based outputs into visual and design applications such as layout generation and scenario modelling.

Justin ends with a caution. “If every employee has multiple AI agents supporting their work, organisations will need to manage those agents just like a workforce,” he says. “Who maintains them? Who updates their skills? Who ensures they operate responsibly? Getting that infrastructure right will separate leaders from laggards.”

This article was supplied by Mott MacDonald. While we are pleased to share their insights, Engineering New Zealand does not verify all claims and does not endorse specific products or services.

About Mott MacDonald

Mott MacDonald is an employee-owned engineering, management and development consultancy, with more than 20,000 people in over 50 countries. We plan, design, deliver and maintain the transport, energy, water, defence and security, and buildings infrastructure that is integral to people’s daily lives. Our core strength is using our expertise to overcome complex challenges, for the benefit of our clients and the communities they serve.

For more information, contact Lauren Smith at Lauren.Smith@mottmac.com