12 Sep 2023

With one engineer well versed in artificial intelligence saying he’s “never seen a step change in technology as rapid as this”, what do engineers in Aotearoa need to know, what can they harness, and what should they avoid?

Artificial intelligence (AI) has been around for decades, but was again thrust into the spotlight with the release of ChatGPT in November 2022.

“We’ve had AI in the background for a long time, but it was more in the realm of experts. It’s now suddenly moved into the public domain and has become vastly more accessible,” says Dale Carnegie MEngNZ, Professor and Dean of Engineering in the School of Engineering and Computer Science at Te Herenga Waka—Victoria University of Wellington.

“I have never seen a step change in technology as rapid as this. We’re in a massive paradigm change.”

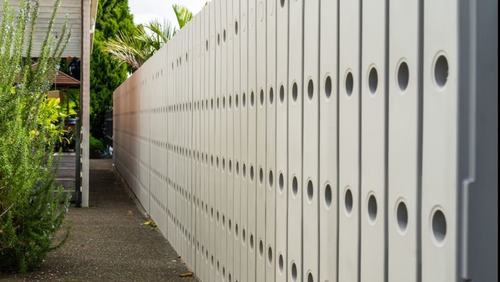

Aotearoa’s infrastructure can benefit from AI. Engineering consultancy Beca is harnessing AI to help manage assets and infrastructure including roads, bridges and utilities. They’re employing computer vision and machine learning to detect, identify and classify problems – including bridge cracks, wastewater pipe defects and roadside kerb and channel defects – from images and videos captured via high-resolution cameras, scanners on vehicles and AI-enabled drones.

Example of a machine learning image detection scenario for a bus shelter project. Image: Beca

“We draw on the big datasets of assets that we have and use our engineers familiar with those assets to test and train models to spot issues with the assets,” says Jack Donaghy, Digital Innovation Leader – Transportation & Infrastructure NZ at Beca.

Benefits from AI-driven solutions here include improved safety, less traffic disruption and savings in time, money and resources.

“It can lead to a lot of predictive maintenance, and we can have better asset management systems and do better forward work planning for assets,” Jack says.

“It’s more accurate as well. It’s not subjective and it’s a consistent output.”

“The jobs won’t go away”

Yet the proliferation of AI technologies is sowing seeds of doubt, leading engineers to question if they could lose their jobs to AI. Dale Carnegie has reassurance.

“The jobs won’t go away. AI will need a huge industry behind it, so there will be a large number of jobs required to support it. We’re going to see a further movement into the high-tech side, and it’s going to increase the return on value that we have,” he says.

And rather than a loss, it would likely be a shift, with AI enabling engineers to do their work more efficiently, resulting in a step up to higher-level roles.

“Engineers will move on to higher-value tasks as well,” says Jack. “Many are using their skills and knowledge to train the models and use good data to manage assets better. They won’t be doing boring, repetitive tasks — their time will be better spent looking at the bigger picture and getting more done in the industry.”

Even junior engineers can add more value.

Jack says, “What we’re finding with our young engineers coming out of university is that they have digital backgrounds. AI can empower them and it can accelerate their development and allow them to focus on higher value activities that benefit the community.”

Don’t “blindly trust” AI

Despite its advantages, AI does come with risks. Earlier this year, Geoffrey Hinton, touted as the “godfather of AI”, stepped down from his post at Google over concerns of bad actors possibly exploiting AI, as well as the existential risk it poses. Alongside other AI experts, he also warned that artificial intelligence could lead to extinction. Privacy and bias are also of concern.

“If you’re Māori, a person of colour or part of a minority group, you will be discriminated against with these systems,” says Dr Karaitiana Taiuru, a Māori Data and Emerging Technologies Ethicist and Kaupapa Māori Researcher.

Avoiding bias requires involving Māori in decision-making processes.

“It’s important that we have Māori developers and ethicists and Māori in governance and management,” Karaitiana says.

“We are at a crossroads right now. We can go left and keep the status quo of colonisation and discrimination. Or, we can turn right and harness the power of AI to empower Māori and minorities and decolonise the biased data we already have, but it’s not possible at this stage because of the lack of engagement.”

Engineers working with iwi on projects that use AI must include and partner with iwi and Māori and understand mātauranga Māori, Karaitiana advises, as well as honour the Māori view of data as a living taonga with immense value.

“We have traditional knowledge, but a lot of iwi and Māori aren’t going to just want to share all their knowledge straightaway,” he adds.

“You’ll need to build up trust, and don’t think that your ideas are more superior than iwi ideas — there needs to be a good balance for everybody.”

Additionally, AI chatbots such as OpenAI’s ChatGPT, Google’s Bard and Microsoft’s Bing search engine are prone to “hallucinations” which occur when they produce false or incorrect outputs.

“Where it’s really dangerous for engineers and the industry is if they are relying on AI tools and just accepting the outcome from that. AI can be wrong — don’t blindly trust it,” emphasises Dale.

To mitigate this risk of inaccuracy, he advises embedding fundamental knowledge in engineers so they have the capacity to reason about, make sense of, question and critically challenge the decisions and results coming out of AI. Establishing engineering standards and regulating engineers are also crucial.

“That tells me that a company is not going to blindly employ AI to do something if the company can then be found liable for a defective construction. And we’ve got very strict building codes, so we know that regardless of whether we’re using AI as a tool, in the end it’s going to have to satisfy those requirements,” Dale says.

Technical upskilling and regular training can also help increase awareness of these risks and enable engineers to keep up with advances in AI.

“We often have domain-specific workshops but we have fewer about the risks involved in AI. That’s something we need to have with ongoing education for our engineers,” says Dale.

Regulation required

While the Privacy Commissioner has issued guidance on the use of generative AI for agencies and businesses, the country currently lacks AI-specific legislation.

“I’d like to see the New Zealand Bill of Rights Act, Human Rights Act, Privacy Act and intellectual property legislation all updated to include AI and ensure it won’t do harm to communities,” Karaitiana says.

“We need Māori at the table legislating. This is a real opportunity for our country to be more equitable for all minorities.”

The challenge, however, is the swift pace of developments in AI.

“Regulation is lagging far behind because the technology is outstripping people’s ability to create the policies around it,” explains Dale. This means that the responsibility falls on engineers to ensure they’re using AI safely.

“The onus is on people in engineering domains to really know what their state of the art is,” he says.

“And it’s not new territory – we’re always developing and testing models, so we have the ability to do that critical analysis. We just have to be careful about how much confidence and faith we’re putting in AI.”

AI central to new Fellows’ careers

Photo: Wellington Free Ambulance

Two new Te Ao Rangahau Fellows are from Te Herenga Waka—Victoria University of Wellington’s School of Engineering and Computer Science. Associate Professor Dr Yi Mei FEngNZ, and Professor Bing Xue FEngNZ, Deputy Head of School of Engineering and Computer Science, Deputy Director for Data Science and Artificial Intelligence, share their thoughts on AI.

How has your work contributed to the development of AI in Aotearoa?

Dr Yi Mei: My research mainly focuses on using AI to assist complex decision-making processes. An example is a project we are working on with Wellington Free Ambulance, which uses AI to help dispatchers make real-time ambulance dispatching decisions. Our preliminary approach, implemented with a controlled test environment utilising simulated dispatch scenarios, suggests we may be able to significantly reduce real-world response times.

Professor Bing Xue: My work contributes mainly to fundamental research and real-world applications of AI in various areas of New Zealand’s primary industry, in collaboration with Cawthron, Plant & Food Research and other organisations. As the Programme Director and Deputy Head of School, I co-led the AI group to establish the first postgraduate qualification and undergraduate major in AI in Aotearoa.

How can Kiwi engineers keep up with developments in AI?

Dr Yi Mei: The current AI development is happening too fast for people to keep up with. But the good news is that the latest AI tools are user-friendly for engineers. You don’t even need to know programming to use AI as tools (for example, the latest Windows has embedded ChatGPT in many of their products). I think the latest trend in AI is large language models and generative AI, which will enable AI to “create” instead of just “mimic” humans, with the corresponding ethical issues raised to be addressed. Attending AI events and workshops will be very helpful.

Professor Bing Xue: Stay connected and collaborate with each other. New Zealand is a small country but has a group of excellent AI researchers and practitioners. The Artificial Intelligence Researchers Association, AI Forum of New Zealand and annual AI workshops provide good opportunities for people to exchange ideas and create opportunities for AI in engineering. Being aware of the ethical considerations and challenges surrounding AI development, deployment and governance is another critical point, which is probably as important as the AI solutions themselves.

What do you think we’ll be doing with AI in five years’ time?

Dr Yi Mei: We are currently at a crossroads. On one hand, we can expect AI to make a dramatic change in our work and lifestyle, as ChatGPT is doing. On the other hand, there could be serious ethical issues if AI is misused.

For example, in five years’ time, you may have a personal AI assistant that learns from your past decisions and makes your favourite work and life recommendations. However, this might not be ethical if past decisions are biased, so AI ethics and regulations are becoming increasingly important.

Professor Bing Xue: I’m positive about the potential of AI and how it can help society. Last year, most people did not know ChatGPT would affect the work and lives of so many people. I’m sure there will be new AI tools appearing in the next five years that could change the way we are doing things as much as what ChatGPT is doing.

This article was first published in the September 2023 issue of EG magazine.